In recent years, Apache Kafka has quietly become a backbone for many large-scale systems, from e-commerce giants tracking user behaviour to financial services streaming transaction data in real time. But for many testers and developers, Kafka can still feel like an intimidating black box: logs? topics? partitions? What exactly is this thing?

At its core, Kafka is a distributed event streaming platform. That phrase can sound a bit buzzword-heavy, but it essentially means Kafka lets different parts of a system talk to each other through a stream of data: reliably, at scale, and in real time. It’s not just another message queue. Kafka was designed to handle high-throughput, fault-tolerant, and time-sensitive workloads that traditional tools often struggle with.

So, why should you care?

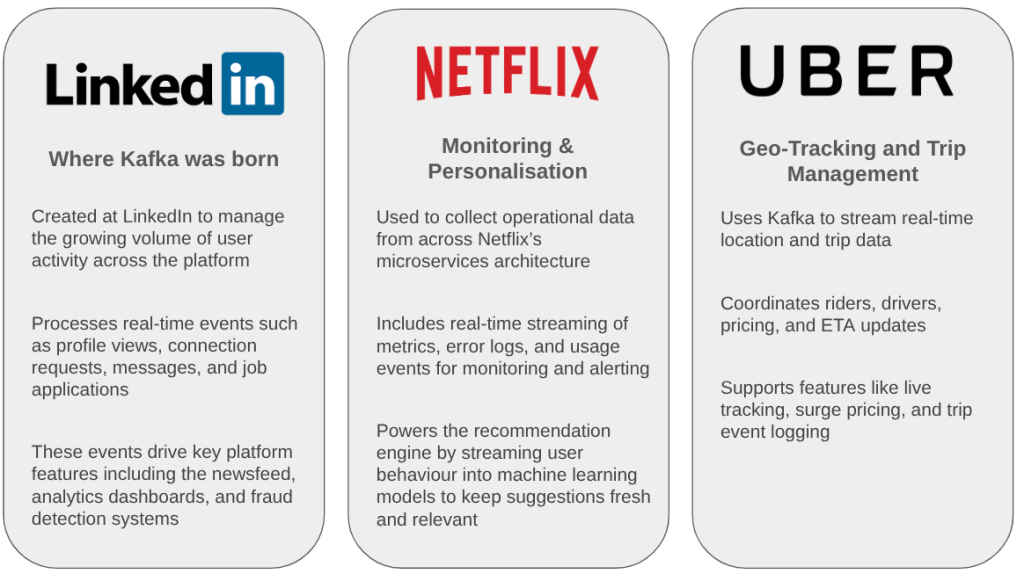

Because Kafka is everywhere. It’s under the hood at companies like LinkedIn, Netflix, Uber, and Spotify, powering event-driven architectures, microservices, data lakes, and real-time analytics pipelines. All that power brings complexity, especially when it comes to testing. If you’re in or around testing, understanding Kafka isn’t optional anymore; it’s essential.

Let’s start with the basics.

What Problem Does Kafka Solve?

To understand Kafka’s purpose, think about how modern systems communicate. Let’s say a user places an order on an online store. That single action might need to trigger:

- An update to the inventory

- A confirmation email to the user

- A billing process with a third-party service

- A log for analytics or fraud detection

In a traditional setup, you’d probably hardwire each of these components to talk to each other directly, through REST APIs, database calls, or background jobs. That works… at first. But over time, the system becomes tightly coupled, harder to scale, and painful to maintain. Every new feature risks breaking three others.

Kafka steps in as a decoupling layer. It acts like a central pipeline where systems publish and subscribe to events independently and asynchronously, without being wired to one another. In the example above, the “OrderPlaced” event would be published to Kafka once. Every other service would subscribe to it and react in its own time, without knowing about the others at all.
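To make the decoupling idea concrete, here’s a toy, in-memory Python sketch of the model Kafka uses: a topic is an append-only log, and each consumer group keeps its own read position (offset) in that log. `ToyTopic`, `publish`, and `poll` are hypothetical names for illustration only; this is not the Kafka client API, and it ignores partitions, persistence, and networking.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ToyTopic:
    """Toy in-memory stand-in for a single-partition topic (not the Kafka API)."""

    log: list = field(default_factory=list)  # append-only event log
    offsets: dict = field(default_factory=lambda: defaultdict(int))  # next offset to read, per group

    def publish(self, event: dict) -> int:
        """Append an event and return its offset; the producer never learns who reads it."""
        self.log.append(event)
        return len(self.log) - 1

    def poll(self, group: str) -> list:
        """Return events this group hasn't read yet, then advance the group's offset."""
        start = self.offsets[group]
        batch = self.log[start:]
        self.offsets[group] = len(self.log)
        return batch


orders = ToyTopic()
orders.publish({"type": "OrderPlaced", "order_id": 42})

# Each downstream service reads the same event independently, at its own pace.
for group in ("inventory", "email", "billing", "analytics"):
    print(group, orders.poll(group))
```

The producer publishes `OrderPlaced` once and never learns who reads it. Because each group keeps its own offset, a group added later (say, a new `fraud` service) simply starts at offset 0 in this toy and sees the full history. In real Kafka, that corresponds to a new consumer group configured with `auto.offset.reset=earliest`; the default, `latest`, starts from new messages only.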

Here’s the core problem Kafka solves:

How do we reliably move and process large volumes of data between services in real time, without coupling them together?

Kafka isn’t just about speed. It’s about:

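- Durability – messages are written to disk and can be replicated across brokers, so they don’t disappear if a service or server fails.

- Scalability – topics are split into partitions spread across brokers, so a well-provisioned cluster can handle millions of events per second.

- Flexibility – new consumers (like a new fraud detection service) can plug in without touching existing code, and can even replay past events that are still within the topic’s retention period.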
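Much of that scalability comes from partitioning: a topic is split into partitions that can live on different brokers and be read in parallel, and messages with the same key go to the same partition (as long as the partition count doesn’t change), which keeps per-key ordering intact. The Python sketch below illustrates that routing rule. Kafka’s default partitioner hashes keys with murmur2; `zlib.crc32` is swapped in here purely as a stable stand-in, so the partition numbers won’t match a real cluster, and `choose_partition` is a hypothetical name.

```python
import zlib


def choose_partition(key: str, num_partitions: int) -> int:
    """Route a message key to a partition (crc32 stand-in for Kafka's murmur2 hash)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


NUM_PARTITIONS = 6
events = [
    ("customer-1", "OrderPlaced"),
    ("customer-2", "OrderPlaced"),
    ("customer-1", "OrderPaid"),
    ("customer-1", "OrderShipped"),
]

# Append each event to its partition, as a producer would.
partitions = {p: [] for p in range(NUM_PARTITIONS)}
for key, event_type in events:
    partitions[choose_partition(key, NUM_PARTITIONS)].append((key, event_type))

# Every customer-1 event lands on the same partition, in the order it was produced.
p1 = choose_partition("customer-1", NUM_PARTITIONS)
print(p1, [e for k, e in partitions[p1] if k == "customer-1"])
```

The flip side, which matters for testing, is that Kafka only guarantees order within a partition, not across a whole topic.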

In essence, Kafka lets your system grow without turning into a tangled mess. And for testers? That opens a whole new set of concerns, which we’ll get to later.

How Kafka Is Being Used in the Real World

Kafka isn’t just a theoretical tool or something you use to tick a tech buzzword box. It’s deeply embedded in the infrastructure of companies you use every day, whether you’re ordering food, watching a film, or transferring money between accounts.

Here are just a few examples of how Kafka is used in practice:

In all these cases, Kafka is solving the same kind of problem: moving high volumes of critical data across different parts of the system, quickly and reliably. And that’s exactly what makes it so powerful, and so tricky to test.

Why Testing Kafka Matters – and Why It’s Different

Kafka isn’t just a queue you fire messages into and forget about. It’s often the spine of a system: a critical layer where data flows, events trigger business logic, and downstream services depend on messages arriving in the right format, at the right time.

If something goes wrong here, you might not find out until hours later… when your analytics are wrong, your emails never went out, or your users are complaining about “weird” behaviour. Testing can’t be an afterthought.

So what makes testing Kafka different?

Most of us are used to testing APIs or services with predictable inputs and outputs. Kafka adds a layer of complexity:

- Asynchronous behaviour – Events are published and consumed on different timelines. Tests can’t just check “Did X return Y?”

- Distributed systems – Multiple producers and consumers may be reading/writing at once. Race conditions and ordering issues are real concerns.

- Stateful event streams – It’s not just about single messages, but about sequences over time, sometimes involving replays or reprocessing.

- Schemas and contracts – Small changes in event structure (like a missing field) can silently break downstream systems.
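Ordering and replay problems are easier to spot if your test harness checks the consumed events explicitly. Here’s a minimal Python sketch that flags duplicates (which can appear after a replay or a retry, since many Kafka setups provide at-least-once delivery) and out-of-order events per key. It assumes each event carries hypothetical `key` and `seq` fields, with the producer incrementing `seq` per key; real payloads would need their own equivalent.

```python
from collections import defaultdict


def find_ordering_problems(consumed):
    """Flag duplicate and out-of-order events per key, in the order they were consumed."""
    seen = defaultdict(set)  # key -> sequence numbers already consumed
    highest = {}             # key -> highest sequence number consumed so far
    problems = []
    for event in consumed:
        key, seq = event["key"], event["seq"]
        if seq in seen[key]:
            problems.append(f"duplicate: {key} seq={seq}")
        elif key in highest and seq < highest[key]:
            problems.append(f"out of order: {key} seq={seq} after seq={highest[key]}")
        seen[key].add(seq)
        highest[key] = max(highest.get(key, seq), seq)
    return problems


consumed = [
    {"key": "order-1", "seq": 1},
    {"key": "order-2", "seq": 1},
    {"key": "order-1", "seq": 3},
    {"key": "order-1", "seq": 2},  # arrived late
    {"key": "order-2", "seq": 1},  # replayed
]
print(find_ordering_problems(consumed))
# ['out of order: order-1 seq=2 after seq=3', 'duplicate: order-2 seq=1']
```

Checking per key, rather than across the whole stream, mirrors what Kafka actually guarantees: order within a partition, and therefore within a key.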

This means testing needs to shift left and get creative.

Approaches to Testing Kafka-Integrated Systems

Here are some of the practical ways teams test Kafka-based workflows:

Unit Testing with Mocks

For basic producer or consumer logic, you can mock Kafka interfaces to test message structure, transformation, and logic. Tools like spring-kafka-test, the MockProducer and MockConsumer classes that ship with the Kafka Java client, or general-purpose mocking libraries make this fairly straightforward. As testers, we usually won’t be writing unit tests, but it’s a good idea to get into the habit of reviewing them. With something as complicated as Kafka, the earlier we get involved, the better.
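As an example of the kind of test you might be reviewing, here’s a small Python unit test that checks consumer logic in isolation. The message handler is an ordinary function that receives the raw message value, and the downstream inventory client is replaced with `unittest.mock.Mock`, so no broker is involved. `handle_order_placed`, `inventory.reserve`, and the message fields are all hypothetical.

```python
import json
import unittest
from unittest.mock import Mock


def handle_order_placed(raw_value: bytes, inventory) -> None:
    """Hypothetical consumer logic: decode an OrderPlaced message and reserve stock."""
    event = json.loads(raw_value)
    if event.get("type") != "OrderPlaced":
        return  # this consumer ignores other event types
    for item in event["items"]:
        inventory.reserve(item["sku"], item["qty"])


class HandleOrderPlacedTest(unittest.TestCase):
    def test_reserves_every_item(self):
        inventory = Mock()  # stands in for the real inventory client
        message = json.dumps({
            "type": "OrderPlaced",
            "items": [{"sku": "ABC", "qty": 2}, {"sku": "XYZ", "qty": 1}],
        }).encode("utf-8")

        handle_order_placed(message, inventory)

        inventory.reserve.assert_any_call("ABC", 2)
        inventory.reserve.assert_any_call("XYZ", 1)
        self.assertEqual(inventory.reserve.call_count, 2)

    def test_ignores_other_event_types(self):
        inventory = Mock()
        handle_order_placed(json.dumps({"type": "OrderCancelled"}).encode("utf-8"), inventory)
        inventory.reserve.assert_not_called()
```

Saved as, say, `test_orders.py`, this runs with `python -m unittest test_orders`. When reviewing tests like these, it’s worth asking what happens with malformed JSON or a missing `items` field, since those are exactly the schema problems that surface later in a Kafka pipeline.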

Integration Testing with Embedded Kafka

For testers, one of the big challenges with Kafka is that it’s not lightweight. Running a full broker locally or in CI just to test message flows can feel like overkill, and in some cases it is. That’s where Embedded Kafka comes in.

Embedded Kafka is exactly what it sounds like: a Kafka broker spun up within your test process, typically in memory. It’s not a mock; it’s the real thing, just packaged and controlled within your test environment. This lets you test actual Kafka interactions (not just your own logic) without deploying any infrastructure.

So why not just use a mock?

The table below compares the two approaches:

| Feature | Mock Kafka | Embedded Kafka |
|---|---|---|
| Speed | Very fast | Slower, but acceptable |
| Infrastructure required | None | In-memory or containerized |
| Real Kafka behaviour | No | Yes |
| Schema & serialization | Simulated | Real |
| Test granularity | Unit tests | Integration/system tests |
| CI-friendly | Easy | Needs setup |

Contract Testing

For teams using Kafka, consumer-driven contract testing with tools like Pact offers a way to catch integration issues early, without relying on a running Kafka cluster. The idea is simple: the consumer defines the message structure it expects, and the producer verifies that it can emit messages that match. Pact treats Kafka events as message contracts, allowing you to validate message formats in CI before they hit production. It’s especially useful when services evolve independently, helping avoid silent failures caused by changed fields, missing values, or schema drift. Bear in mind that contract tests check the shape of messages only; they don’t test actual delivery through Kafka.
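Pact has its own DSL and tooling for message contracts, so the following is not Pact’s API. It’s a hand-rolled Python sketch of the underlying idea: the consumer writes down the fields and types it relies on, and a check in the producer’s CI compares a sample message against that expectation. `ORDER_PLACED_CONTRACT`, `contract_violations`, and the field names are hypothetical.

```python
# Consumer-owned expectations: field name -> required type (hypothetical contract).
ORDER_PLACED_CONTRACT = {"order_id": str, "amount_pence": int, "currency": str}


def contract_violations(message: dict, contract: dict) -> list:
    """Return reasons why `message` breaks `contract`; an empty list means it conforms."""
    problems = []
    for name, expected_type in contract.items():
        if name not in message:
            problems.append(f"missing field: {name}")
        elif type(message[name]) is not expected_type:  # exact match, so True won't pass as an int
            problems.append(
                f"{name}: expected {expected_type.__name__}, got {type(message[name]).__name__}"
            )
    return problems


# A producer sample where "amount_pence" has been renamed to "amount".
producer_sample = {"order_id": "A-1001", "amount": 1999, "currency": "GBP"}
print(contract_violations(producer_sample, ORDER_PLACED_CONTRACT))
# ['missing field: amount_pence']
```

Extra fields are deliberately tolerated here, since adding a field usually doesn’t break existing consumers, while removing or retyping one often does.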

End-to-End Testing in Staging

End-to-end testing in a Kafka-based system focuses on validating that events trigger the right outcomes across services, not just that messages are published. Instead of inspecting Kafka directly, testers simulate real actions (like placing an order) and assert that downstream effects occur, such as a database update or a confirmation email. Because Kafka is asynchronous, those effects won’t appear immediately, so tests typically poll for the expected outcome until it appears or a timeout expires, rather than asserting straight away. For example, testing the flow triggered by a UserRegistered event might involve creating a test user via an API, then confirming that a welcome email was sent, a user profile was created in the database, and an audit log entry was written. Together, these checks verify that the full event-driven chain behaves as expected.
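Fixed `sleep` calls make asynchronous tests either slow or flaky. A common alternative is a small polling helper that retries a check until it passes or a timeout expires; Awaitility plays this role in many Java test suites. Here’s a minimal Python sketch: `wait_until` is a hypothetical helper, and the background `threading.Timer` merely simulates a downstream consumer finishing its work.

```python
import threading
import time


def wait_until(condition, timeout_s=10.0, interval_s=0.2):
    """Poll `condition()` until it returns something truthy, or fail after `timeout_s`.

    Returns the truthy value so the test can make further assertions on it.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise AssertionError(f"condition not met within {timeout_s}s")
        time.sleep(interval_s)


# Simulated downstream effect: a profile appears ~0.5s after the (imaginary) event is published.
profiles = {}
threading.Timer(0.5, lambda: profiles.update({"test-user-1": {"status": "active"}})).start()

# In a real E2E test, the lambda would query the database or a test inbox instead.
profile = wait_until(lambda: profiles.get("test-user-1"), timeout_s=5)
print(profile)
```

The test finishes as soon as the effect shows up, and the timeout turns a missing effect into a clear failure instead of a hang.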

Monitoring as a Safety Net

Even with all of the above, real-world failures happen. Strong observability (e.g., lag monitoring, dead letter queues, event tracing) becomes a key part of your test strategy.
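Consumer lag is one of the most useful of those signals: for each partition, it’s the gap between the latest offset written to the log and the offset the consumer group has committed. Kafka’s `kafka-consumer-groups.sh --bootstrap-server <host:port> --describe --group <group>` tool reports it directly; the Python sketch below just shows the arithmetic, with `consumer_lag`, `lag_alerts`, and the offset snapshot all hypothetical.

```python
def consumer_lag(end_offsets, committed):
    """Per-partition lag: log-end offset minus the group's committed offset.

    Partitions with no committed offset are treated as fully unread (offset 0) in this sketch.
    """
    return {p: end - committed.get(p, 0) for p, end in end_offsets.items()}


def lag_alerts(lag, threshold):
    """Partitions whose lag exceeds `threshold`, sorted for stable output."""
    return sorted(p for p, value in lag.items() if value > threshold)


# Hypothetical snapshot of a three-partition topic.
end_offsets = {0: 1_500, 1: 1_480, 2: 9_200}
committed = {0: 1_498, 1: 1_480, 2: 3_100}

lag = consumer_lag(end_offsets, committed)
print(lag)                               # {0: 2, 1: 0, 2: 6100}
print(lag_alerts(lag, threshold=1_000))  # [2]
```

A lag that keeps growing suggests consumers aren’t keeping up, even if every individual test passed.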

A Shift in Mindset

Testing Kafka isn’t just about checking whether something “works”; it’s about confidence at scale, across time and complexity. As testers, our job isn’t just to click through happy paths; it’s to build that confidence.

Kafka isn’t a silver bullet. But when you’re working in systems that need to move fast, scale hard, and stay resilient, it can be a game changer. It shines in situations where traditional point-to-point communication or polling-based approaches just can’t keep up.

But with great power comes… well, complexity. Especially for testers.

Understanding the flow of events, validating contracts, tracing side effects, and anticipating failure scenarios all become part of the testing mindset. Kafka shifts the challenge from “Is this endpoint working?” to “Are we confident the entire pipeline behaves correctly, even when things get weird?”

You don’t have to become a distributed systems expert overnight. But getting familiar with how Kafka works, and how it fails, puts you in a much better position to test, build, and contribute meaningfully in modern software teams.

Wrapping Up

If you’re a tester, learning Kafka doesn’t mean becoming a DevOps engineer or a stream processing expert. It means asking good questions, spotting assumptions, and treating data as a first-class citizen. Kafka changes how systems behave, and how we test them needs to evolve too.